Incident 273: FaceApp Predicted Different Genders for Similar User Photos with Slight Variations

Description: FaceApp’s algorithm was reported by a user to have predicted different genders for two mostly identical facial photos with only a slight difference in eyebrow thickness.

Entities

Alleged: FaceApp developed and deployed an AI system, which harmed FaceApp non-binary-presenting users, FaceApp transgender users, and FaceApp users.

Incident Stats

Incident ID: 273

Report Count: 1

Incident Date: 2020-12-24

Editors: Khoa Lam

Incident Reports

twitter.com · 2020

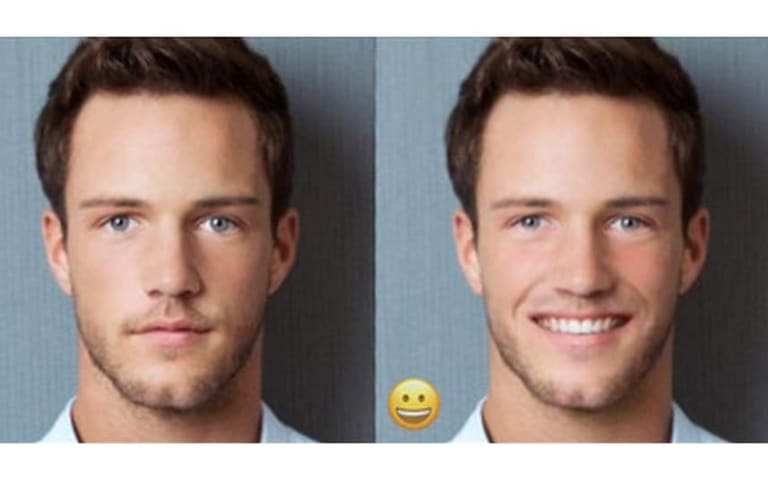

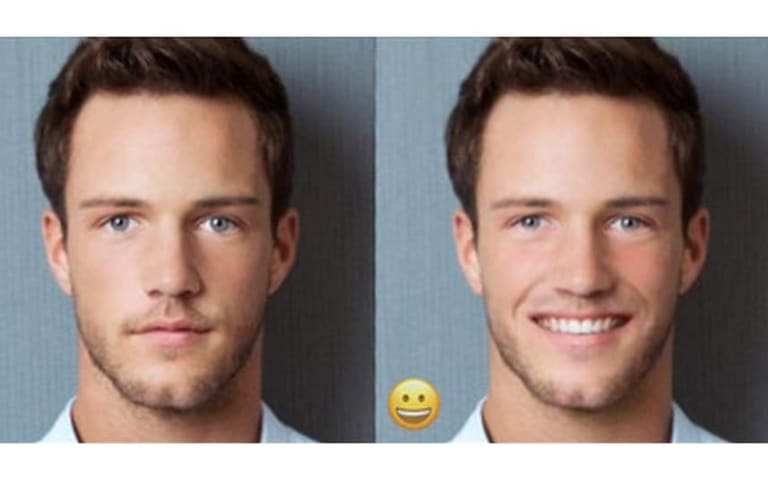

I’d like to talk a little bit about algorithms, dysphoria, and dysmorphia.

I’ve struggled with algorithms. I’ll often take a picture and run it through FaceApp to get gendered.

I recently noticed that when my eyebrows are thin, it says I am…

Variants

A "variant" is an incident that shares the same causative factors, produces similar harms, and involves the same intelligent systems as a known AI incident. Rather than index variants as entirely separate incidents, we list variations of incidents under the first similar incident submitted to the database. Unlike other submission types to the incident database, variants are not required to have reporting in evidence external to the Incident Database. Learn more from the research paper.

Similar Incidents

Gender Biases of Google Image Search · 11 reports

AI Beauty Judge Did Not Like Dark Skin · 10 reports

FaceApp Racial Filters · 23 reports
